The AI Executive Briefing — Edition 15: Resetting the Mandate

- uzubler

- Jan 16


🧭 Executive Foreword — A refreshed mandate

As we enter 2026, The AI Executive Briefing gets a sharpened mandate.

This briefing is not about explaining AI. It is about running AI: when it becomes infrastructure, when it carries risk, and when it absorbs capital.

AI has moved beyond pilots and prototypes. It now sits inside production lines, inspection systems, logistics flows, grids, and safety-critical operations. That changes who is accountable, how decisions are made, and what failure means.

This briefing exists for executives who carry that responsibility.

Same format. Same cadence. Sharper focus.

🎯 Edition Focus — From experimentation to obligation

Industrial and enterprise AI has crossed a threshold.

Once AI enters production:

Compute becomes capacity.

Power and cooling become constraints.

Assurance becomes operational.

Failure becomes a leadership issue, not a technical one.

In 2026, the question is no longer “Can we deploy AI?” It is “Can we operate it safely, economically, and at scale?”

This edition resets the objective of the briefing around that reality.

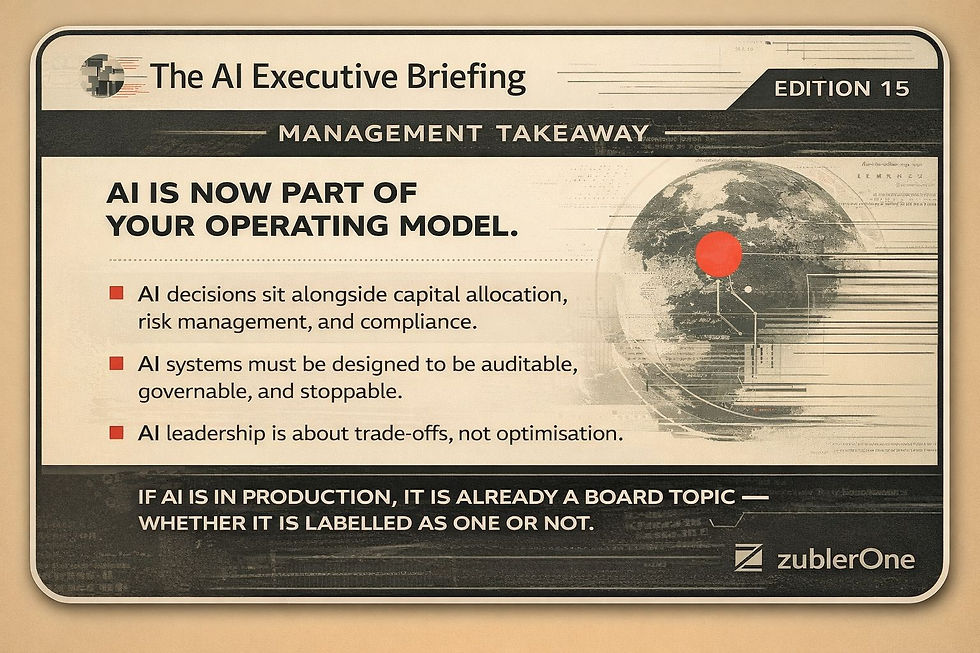

💡 Management Takeaway — The briefing’s core thesis

AI is now part of your operating model.

That means:

AI decisions sit alongside capital allocation, risk management, and compliance.

AI systems must be designed to be auditable, governable, and stoppable.

AI leadership is about trade-offs, not optimisation.

If AI is in production, it is already a board topic — whether it is labelled as one or not.

⚙️ Reality Check — What breaks first

In real deployments, the first failures are rarely the models.

What breaks:

Cost assumptions once inference runs 24/7.

Organisational clarity when incidents occur.

Accountability across vendors and internal teams.

Confidence when regulators ask for evidence, not intent.

Most organisations underestimate operational drag, not model risk.
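The cost point can be made concrete with simple arithmetic. The sketch below compares a pilot that runs during working hours with the same workload running around the clock. The hourly rate, schedule, and function name are hypothetical placeholders chosen for illustration, not figures from any real deployment.

```python
def monthly_inference_cost(hourly_rate, hours_per_day, days_per_month, instances=1):
    """Compute a rough monthly compute bill for an always-provisioned instance.

    All inputs are assumptions supplied by the caller; power, cooling,
    and staffing costs are deliberately left out of this sketch.
    """
    return hourly_rate * hours_per_day * days_per_month * instances


# Hypothetical rate of 2.0 currency units per instance-hour.
pilot = monthly_inference_cost(2.0, hours_per_day=8, days_per_month=22)
production = monthly_inference_cost(2.0, hours_per_day=24, days_per_month=30)

# Ratio of always-on cost to pilot cost for a single instance.
ratio = production / pilot
print(f"pilot={pilot:.0f} production={production:.0f} ratio={ratio:.2f}")
```

Under these assumed inputs, moving from a working-hours pilot to 24/7 operation roughly quadruples the compute bill for a single instance, before any scale-out or energy costs are counted.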

📰 Signals & Moves — What actually matters

Regulators are shifting from principles to enforcement readiness.

Industrial players are aligning compute, energy, and data strategies.

Assurance frameworks (EU AI Act, NIST AI RMF) are moving into procurement and supplier contracts.

Edge AI is accelerating where latency, safety, and sovereignty matter.

These are not trends. They are constraints.

🧩 Best Practices — Ops-ready, not theoretical

Treat compute, power, and cooling as strategic resources.

Design AI systems for graceful failure and safe states.

Log everything — prompts, configs, data, versions.

Keep humans in the loop where accountability sits.

Standardise assurance across suppliers, not per project.

Use simulation and digital twins before deployment, not after incidents.
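Two of these practices, logging everything and failing into a safe state, can be sketched together in a few lines. The example below is a minimal illustration under stated assumptions, not a reference implementation: the record fields, the `SAFE_STATE` value, and the `run_with_audit` helper are hypothetical names, and `model` stands for any callable that takes a prompt and returns an output.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical safe default returned when the model call fails.
SAFE_STATE = "HOLD_FOR_HUMAN_REVIEW"


@dataclass(frozen=True)
class InferenceRecord:
    """One audit entry per model call (field names are illustrative)."""
    timestamp: str
    model_version: str
    config_hash: str
    prompt: str
    output: str
    fell_back: bool


def hash_config(config: dict) -> str:
    """Hash the config in a key-order-independent way for later comparison."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def run_with_audit(model, prompt, model_version, config, log):
    """Call the model, fall back to the safe state on error, and log either way."""
    try:
        output = model(prompt)
        fell_back = False
    except Exception:
        # Graceful failure: return a known safe state instead of crashing.
        output = SAFE_STATE
        fell_back = True
    record = InferenceRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        model_version=model_version,
        config_hash=hash_config(config),
        prompt=prompt,
        output=output,
        fell_back=fell_back,
    )
    # Append one JSON line per call; a real system would use durable storage.
    log.append(json.dumps(asdict(record)))
    return record
```

The design choice worth noting is that the record is written whether the call succeeds or fails, so the evidence trail covers incidents as well as normal operation.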

🧭 Management Takeaway — What leaders should do now

To run AI responsibly in 2026:

Secure compute, power, and chips like strategic assets.

Standardise assurance (EU AI Act / NIST AI RMF) across the ecosystem.

Push real-time inference to the edge; keep fleet learning in the cloud.

Fund digital twins early — they reduce risk and shorten time-to-value.

AI leadership is not about speed alone. It is about control at scale.

🚀 Coming Up Next — Edition 16

AI for Energy-Intensive Operations

Power, cooling, cost curves, and why AI economics now drive architectural choices.

💬 Have a real deployment insight, failure mode, or lesson learned?

Comment or DM me. Selected inputs will shape future editions.

🔗 Full archive on LinkedIn: follow zublerOne

🧠 Deep dives at zublerOne.com
